
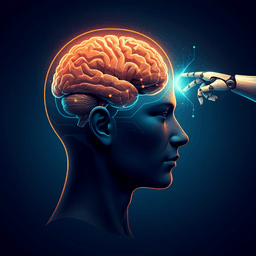

Human 0, MLLM 1: Unlocking New Layers of Automation in Language-Conditioned Robotics with Multimodal LLMs

R. ElMallah, N. Zamani, et al.

Ramy ElMallah, Nima Zamani, and Chi-Guhn Lee explore how Multimodal Large Language Models (MLLMs) can automate functions that humans currently perform in language-conditioned robotics. In their experiments, GPT-4 and Google Gemini achieved over 90% accuracy in feasibility analysis. The results suggest that MLLM-IL has the potential to significantly advance automation in the field.
