Data-driven fine-grained region discovery in the mouse brain with transformers

A. J. Lee, A. Dubuc, et al.

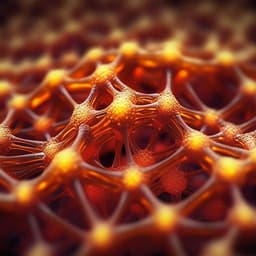

The study addresses the challenge of transforming large, high-dimensional spatial transcriptomics datasets into useful anatomical representations, particularly in the mouse brain. Hierarchical spatial organization is common in tissues, but existing computational methods often do not scale to multimillion-cell, multi-section datasets or require extensive prior neuroanatomical knowledge. The authors propose CellTransformer, a self-supervised transformer-based approach that learns latent neighborhood representations conditioned on cell type to discover spatial domains, aiming to produce data-driven, fine-grained brain parcellations comparable to and extending the Allen Mouse Brain Common Coordinate Framework (CCF). The work’s purpose is to enable organ-wide, scalable and interpretable domain discovery across multiple animals and modalities while minimizing dependence on manual annotations.

Existing spatial domain detection methods face scalability and resolution constraints. Approaches operating on whole tissue-section matrices often exceed GPU/RAM capacity due to large pairwise distance matrices, and Gaussian process-based methods scale cubically with observations. Some scalable methods (e.g., CellCharter, SPIRAL) either struggle to capture granular structure or require extensive batch correction and supervision. Other notable pipelines (e.g., scENVI, STACI, spaGCN, STAligner, STAGATE, GraphST) become infeasible at million-cell scales due to memory requirements (e.g., >60 TB for ~4M cells to store pairwise distances). Prior research has shown gene-expression-based clustering can parcellate brain regions, but integration across sections and discovery of fine-grained, spatially coherent domains remain challenging. The Allen Brain Cell Whole Mouse Brain (ABC-WMB) Atlas provides a multi-million cell MERFISH and scRNA-seq resource enabling evaluation of domain-detection tools against expert-derived CCF annotations at multiple levels (division, structure, substructure).
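
The memory figure quoted above can be checked with a short back-of-the-envelope calculation. The sketch below assumes a dense matrix of 32-bit floats and decimal terabytes (1 TB = 10^12 bytes); both are illustrative assumptions, not details taken from the paper, and the function name is hypothetical.

```python
def pairwise_matrix_terabytes(n_cells: int, bytes_per_entry: int = 4) -> float:
    """Memory needed for a dense n x n distance matrix, in decimal terabytes.

    bytes_per_entry=4 assumes float32 storage (an assumption for illustration).
    """
    return n_cells * n_cells * bytes_per_entry / 1e12


# Roughly 4 million cells, as in the example quoted in the text.
dense_tb = pairwise_matrix_terabytes(4_000_000)
print(f"dense float32 distance matrix for 4M cells: {dense_tb:.0f} TB")
```

Under these assumptions the matrix alone needs about 64 TB, which is consistent with the ">60 TB" figure and shows why methods that materialize all pairwise distances do not reach this scale.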

CellTransformer architecture: A graph transformer that learns latent representations of cellular neighborhoods by conditioning gene expression prediction of a masked reference cell on neighborhood context and cell type. Neighborhood definition: cells within a fixed-size box around a reference cell (85 µm for MERFISH datasets; 50 µm for Slide-seqV2). Input tokens per observed neighbor cell are constructed by concatenating embedded gene expression (two-layer MLP with GELU mapping to 192-d) and embedded cell type (learned embedding to 192-d), yielding 384-d per-cell tokens. A register (
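
The neighborhood selection and per-cell token construction described above can be sketched in NumPy as follows. This is a minimal illustration, not the authors' implementation: the weights are random stand-ins for learned parameters, the hidden width of the MLP is assumed to be 192, the box size is interpreted as a side length, the reference cell is excluded from the observed neighbors, and the gene and cell-type counts are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes for illustration; only the 192-d embeddings come from the text.
n_cells, n_genes, n_types, d = 200, 500, 30, 192


def gelu(x):
    # Tanh approximation of the GELU activation.
    return 0.5 * x * (1.0 + np.tanh(np.sqrt(2.0 / np.pi) * (x + 0.044715 * x**3)))


def neighbors_in_box(xy, ref_idx, box_um=85.0):
    """Indices of cells inside a square box centered on the reference cell.

    Assumption: box_um is the side length of the box, in micrometers.
    """
    half = box_um / 2.0
    inside = np.all(np.abs(xy - xy[ref_idx]) <= half, axis=1)
    inside[ref_idx] = False  # the reference cell is masked, so it is not an observed neighbor
    return np.flatnonzero(inside)


# Random stand-ins for learned parameters.
W1, b1 = rng.normal(0, 0.02, (n_genes, d)), np.zeros(d)
W2, b2 = rng.normal(0, 0.02, (d, d)), np.zeros(d)
type_table = rng.normal(0, 0.02, (n_types, d))


def build_tokens(expr, cell_type):
    """Concatenate a 192-d expression embedding with a 192-d cell-type embedding."""
    gene_emb = gelu(expr @ W1 + b1) @ W2 + b2   # two-layer MLP with GELU
    type_emb = type_table[cell_type]            # embedding-table lookup
    return np.concatenate([gene_emb, type_emb], axis=1)


# Toy data: cell positions in micrometers, expression counts, cell-type ids.
xy = rng.uniform(0, 500, (n_cells, 2))
expr = rng.poisson(1.0, (n_cells, n_genes)).astype(float)
cell_type = rng.integers(0, n_types, n_cells)

ref_idx = 0
nbrs = neighbors_in_box(xy, ref_idx)
tokens = build_tokens(expr[nbrs], cell_type[nbrs])
print(tokens.shape)  # (number of neighbors, 384)
```

Each row of `tokens` is one neighbor cell; the 384 columns are the two 192-d embeddings placed side by side.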

• CellTransformer discovers spatially coherent, biologically meaningful domains at multiple resolutions (k=25/354/670 and finer k=1300), reproducing cortical laminar structure and identifying motor cortex Layer 4 consistent with recent evidence.
• Spatial homogeneity: At k=670, CellTransformer achieves markedly higher single-cell neighborhood smoothness than CellCharter (+58.2%), SPIRAL (+4091.2%), k-means on cellular neighborhoods (+61.9%), and gene-expression-only k-means (+419.2%); smoothing has minimal effect, indicating embeddings are inherently smooth.
• Discrete domain proportion: CellTransformer maintains high proportions of discrete domains across resolutions; comparator methods’ discreteness declines significantly at 354 and 670 domains.
• Similarity to CCF: At subclass-level composition, CellTransformer yields superior maximum and matched Pearson correlations versus CCF across mid/fine resolutions. At cluster-level (5274 types), average Pearson correlation between CellTransformer and CCF domains is 0.853, with strong block structures (>0.9) in correlation matrices. NMI vs CCF at k=670 improves over CellCharter by ~13.4% and over SPIRAL by ~46.7%; similar gains without smoothing.
• Stability analysis identifies k≈1300 as a practical resolution for fine-grained analyses, supporting hierarchical parcellations; inertia and instability curves’ second derivative crossing indicates k=1300.
• Subiculum and prosubiculum mapping (k=1300): CellTransformer recovers dorsal subiculum three-layer strata (molecular, pyramidal, polymorphic) and dorsal/ventral organization, matching Ding et al.; detects dorsal-ventral gene expression gradients (e.g., Bnc2, Six3, Pax5) consistent with literature.
• Superior colliculus: Identifies known sensory layers (zonal, superficial gray, optic) and unannotated subregions in intermediate gray/white with layer-specific enriched cell types (e.g., SCsg Gabrr2 GABAergic, SCop Sln glutamatergic; rare types Foxb1, Pitx2 markers), revealing laminar and medial-lateral gradients.
• Midbrain reticular nucleus: Discovers four subregions absent in CCF, with dorsal-ventral gradients; domain-level neurotransmitter composition shows correlations for glutamatergic (r=0.89) and non-neuronal (r=0.81) type counts vs proportions, but not for GABAergic (r=-0.64).
• Multi-animal integration (Zhuang 1–4; 1129 genes): High cross-animal consistency: at k≈630, 93.3% of domains common to the three largest datasets and 80.0% across all four; average Pearson correlation to CCF regions is 0.805 when fitting across animals, >0.7 when fitting per animal.
• Linear probing indicates embeddings encode donor and spatial information: donor classification accuracy >94% for all animals; median absolute coordinate prediction error ~151 µm; performance correlates with per-mouse cell counts.
• Slide-seqV2 generalization: With increased model capacity (10 encoder layers) and QC, CellTransformer identifies coherent domains (e.g., cortical layers, midbrain, piriform) at k=50; higher k reduces cross-section integration, likely due to variable density/read depth.
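
One way to picture the composition-based comparison to CCF reported above is the sketch below: each discovered domain and each reference region is summarized by its cell-type proportions, and every domain is scored by its best Pearson correlation with any reference region. The function names and toy data are hypothetical, and the paper's exact procedure (including its "matched" variant) may differ.

```python
import numpy as np


def composition(labels, cell_types, n_labels, n_types):
    """Fraction of each cell type within each label (rows sum to 1 for non-empty labels)."""
    counts = np.zeros((n_labels, n_types))
    np.add.at(counts, (labels, cell_types), 1.0)
    return counts / np.clip(counts.sum(axis=1, keepdims=True), 1.0, None)


def max_pearson_to_reference(domain_comp, region_comp):
    """For each domain, the highest Pearson correlation with any reference region."""
    n = domain_comp.shape[0]
    # corrcoef stacks the rows of both inputs; keep the domain-by-region block.
    corr = np.corrcoef(domain_comp, region_comp)[:n, n:]
    return corr.max(axis=1)


# Toy data: 3 reference regions with distinct mixes of 4 cell types.
rng = np.random.default_rng(0)
n_cells, n_regions, n_types = 3000, 3, 4
probs = np.array([[0.7, 0.1, 0.1, 0.1],
                  [0.1, 0.7, 0.1, 0.1],
                  [0.1, 0.1, 0.7, 0.1]])
region = rng.integers(0, n_regions, n_cells)
cell_types = np.empty(n_cells, dtype=int)
for r in range(n_regions):
    mask = region == r
    cell_types[mask] = rng.choice(n_types, size=mask.sum(), p=probs[r])

# Hypothetical "discovered" domains: the same partition under a different labelling.
domain = (region + 1) % n_regions

domain_comp = composition(domain, cell_types, n_regions, n_types)
region_comp = composition(region, cell_types, n_regions, n_types)
best = max_pearson_to_reference(domain_comp, region_comp)
print(best)
```

Because the toy domains are just relabelled regions, every domain finds a reference region with an identical composition; real domains would give a spread of values below 1.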

CellTransformer provides a scalable, self-supervised transformer-based framework that learns fixed neighborhood representations for organ-wide domain discovery in spatial transcriptomics. It achieves fine-grained, spatially coherent domains aligned with neuroanatomical ontologies (CCF) and recapitulates known structures and gradients in hippocampal formation and superior colliculus, while discovering plausible subregions in understudied areas (MRN). The approach integrates across tissue sections and animals without explicit batch modeling, suggesting robust learned features. Compared to recent scalable methods, CellTransformer maintains spatial coherence at high domain counts and delivers higher correspondence to CCF, indicating improved biological relevance. The transformer design enables future inclusion of additional modalities (cell-level neurophysiology, imaging connectomics, MRI) as tokens, offering a path toward richer anatomical maps and structure-function inference from multi-omics spatial data. The authors emphasize that discovered domains are operational, not normative, and that brain organization may include gradients rather than strictly discrete regions; nonetheless, the method satisfies neuroanatomical conventions for discrete parcellations and provides a practical foundation for data-driven tissue mapping.

The study introduces CellTransformer, a self-supervised transformer architecture that scales to multi-million-cell, multi-animal spatial transcriptomics datasets and discovers fine-grained, spatially coherent tissue domains. It matches and extends CCF-like parcellations, robustly integrates across sections and donors, and generalizes to Slide-seqV2. The method recovers known laminar and regional organization and uncovers putative subregions with interpretable gene and cell-type signatures. Future directions include improved positional encoding (e.g., generalized Laplacian, rotational invariance), handling arbitrary gene sets, probabilistic domain models, and incorporating additional modalities to further enrich neighborhood representations and enable comprehensive structure-function mapping.

Discovered domains are not asserted as normative and may reflect discrete parcellations of underlying gradients. The approach requires user-specified neighborhood radius and choice of k for clustering; domain stability varies with these parameters. GPU resources are needed for timely training and clustering, limiting accessibility compared to lightweight methods, though memory demands are lower than many graph-based pipelines requiring full pairwise matrices. Registration inaccuracies to CCF can depress ARI/NMI magnitudes. Optional Gaussian smoothing may erode fine laminar boundaries. Some comparator pipelines could not be run at scale due to out-of-memory constraints, limiting breadth of direct comparisons.
