
Machine learning identifies scale-free properties in disordered materials

S. Yu, X. Piao, et al.

Disordered systems span periodic, quasi-periodic, and correlated/uncorrelated regimes and enable rich wave phenomena such as Anderson localization, broadband coupling/absorption, and disorder-induced topological transitions. Traditional exploration relies on low-order microstructural descriptors (e.g., n-point probabilities, percolation, cluster functions) and optimization methods but faces scalability limits due to combinatorial design freedom and the difficulty of computing higher-order descriptors. The research question is whether data-driven deep learning can learn and invert the complex mapping between disordered microstructures and wave localization, enabling rapid prediction and inverse design while revealing underlying structural principles. The authors propose deep convolutional neural networks (CNNs) to (i) predict localization from disorder and (ii) generate disorder from target localization, aiming to uncover intrinsic, possibly universal, microstructural statistics induced by the learning architecture and to leverage them for robustness in wave behavior.

Prior work has used analytical descriptors (n-point probabilities, percolation, cluster functions) to classify disorder and relate structure to wave properties, and inverse-design methods such as stochastic, genetic, and topology optimization to generate structures. Because higher-order descriptors are computationally expensive, these approaches mostly rely on low-order statistics, although even simple descriptors (e.g., hyperuniformity) have enabled disordered photonic bandgap materials. Deep learning has succeeded in pattern recognition and has been extended to physics problems, including classifying crystals and topological order, learning phase transitions and order parameters, and inverse design of nanophotonic devices. Existing ML inverse-design strategies address non-uniqueness using forward–inverse training, reinforcement learning, or multiple networks per structural family. The present work trains an inverse network against a pretrained forward model, extends ML to disordered wave systems, and connects to results from network science and random matrix theory on heavy-tailed behavior in trained networks.

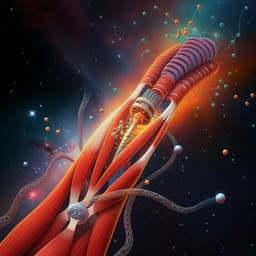

Physical model: A finite 2D square lattice (N = 16×16 atoms) with random site displacements is modeled by the tight-binding Hamiltonian H = Σ_i ε a_i^† a_i + Σ_{i,j} t_{ij}(a_i^† a_j + h.c.), with near-field hopping t_{ij} = t_0 exp(−a d_{ij}), where a is the decay constant and d_{ij} is the distance between sites i and j in the deformed lattice. The on-site energy ε is set to 1 for the spectra; it does not affect the mode areas.

Localization metric: The normalized mode area W_m = 1 / (N Σ_s (Ψ_{ms}^2)^2), the inverse of the inverse participation ratio (IPR) normalized by N, is computed from the eigenstates of H.

Representation: Each disordered structure’s 2D atomic displacements are projected onto x and y components (Δx, Δy) and encoded as two 16×16 grayscale images (two channels). Localization arrays of length N are reshaped to single-channel 16×16 images for inverse modeling.

Datasets and deformation generation: Training data are generated by combining collective and individual atomic displacements: Δr_ix = ρ cos(u_i(0,2π)) + (−σ,+σ), Δr_iy = ρ sin(u_i(0,2π)) + (−σ,+σ), with ρ = ρ_max u(0,1), σ = σ_max u(0,1), and ρ_max = σ_max = 0.6. Distinct random seeds are used for the training, validation, and test sets. The D2L dataset contains 2×10^4 training, 1×10^4 validation, and 1×10^4 test realizations.

D2L CNN (forward): The input is two 16×16 channels, followed by three Conv(3×3)-MaxPool stages with 256, 512, and 1024 filters, zero-padding, and ReLU activations; the result is flattened into FC(2048) and then an N-neuron output for W_m. Optimization uses Adam with a decaying learning rate, a mini-batch size of 10, and 50% dropout on the FC layer to mitigate overfitting. Loss: mean absolute percentage error (MAPE), L_D2L = Σ_m |W_m^ML − W_m^True| / W_m^True.

L2D CNN (inverse) and L2D2L training: L2D mirrors the D2L architecture but takes the reshaped localization image as input and outputs 2N neurons, reshaped to two 16×16 displacement images. To ensure physical validity, the pretrained D2L with fixed weights is cascaded after L2D, forming an L2D2L autoencoder for localization. Loss: L_L2D2L = Σ_m |W_m^Target − W_m^ML| / W_m^Target. L2 regularization (scale 0.05) is applied in L2D to suppress large weights.

Hardware: two NVIDIA RTX 2080 Ti GPUs.

Analysis: Microstructural statistics of the atomic displacement magnitudes Δr_i = [(Δr_x)^2 + (Δr_y)^2]^{1/2} are compared between seed and ML-generated structures using maximum-likelihood power-law fitting with goodness-of-fit tests. Complementary CDFs are evaluated, and the power-law exponent α and lower bound Δr_min are assessed over varying numbers of realizations. The relationship between network weights and generated microstructures is probed by analyzing the distribution of weight strengths W = [(w^x)^2 + (w^y)^2]^{1/2} from FC(2048) to the output layer (512 neurons) in L2D; ablation with single-pooling-layer CNNs is used to test architectural effects.

Robustness tests: Randomized positional “attacks” are applied to individual atoms (rx = rx0 + p_a cos u(0,2π), ry = ry0 + p_a sin u(0,2π)), and the resulting perturbations in mode areas ΔW_m and the averaged normalized errors δ per atom are measured to identify hub atoms.
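The physical model, the mode-area metric, and the single-atom attack described above can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not the authors' code: the function names are hypothetical, the values of t_0 and the decay constant a are not given in this summary and are set to 1 here, hopping is taken between all site pairs, the individual term "(−σ,+σ)" is assumed to be a uniform draw, and the lattice spacing is assumed to be 1.

```python
import numpy as np

def square_lattice(n=16):
    """Ideal n x n square-lattice sites with unit spacing (assumed), shape (n*n, 2)."""
    axis = np.arange(n, dtype=float)
    gx, gy = np.meshgrid(axis, axis, indexing="ij")
    return np.stack([gx.ravel(), gy.ravel()], axis=1)

def seed_displacements(n_sites, rng, rho_max=0.6, sigma_max=0.6):
    """Collective ring displacement of radius rho plus an individual random term."""
    rho = rho_max * rng.uniform(0.0, 1.0)      # one rho per realization
    sigma = sigma_max * rng.uniform(0.0, 1.0)  # one sigma per realization
    theta = rng.uniform(0.0, 2.0 * np.pi, size=n_sites)
    ring = rho * np.stack([np.cos(theta), np.sin(theta)], axis=1)
    # Assumption: the "(-sigma, +sigma)" term is drawn uniformly.
    jitter = rng.uniform(-sigma, sigma, size=(n_sites, 2))
    return ring + jitter

def hamiltonian(sites, eps=1.0, t0=1.0, decay=1.0):
    """Tight-binding matrix: eps on the diagonal, t0*exp(-decay*d_ij) elsewhere."""
    dist = np.linalg.norm(sites[:, None, :] - sites[None, :, :], axis=-1)
    h = t0 * np.exp(-decay * dist)  # t0 and decay are placeholder values
    np.fill_diagonal(h, eps)
    return h

def mode_areas(h):
    """Normalized mode area W_m = 1 / (N * sum_s |Psi_ms|^4) for every eigenmode."""
    _, vecs = np.linalg.eigh(h)  # columns are unit-norm eigenvectors
    ipr = np.sum(np.abs(vecs) ** 4, axis=0)
    return 1.0 / (h.shape[0] * ipr)

def attack_site(sites, index, p_a, rng):
    """Move one atom by distance p_a in a uniformly random direction."""
    theta = rng.uniform(0.0, 2.0 * np.pi)
    moved = sites.copy()
    moved[index] += p_a * np.array([np.cos(theta), np.sin(theta)])
    return moved

rng = np.random.default_rng(0)
sites = square_lattice(16) + seed_displacements(256, rng)
w_seed = mode_areas(hamiltonian(sites))
w_hit = mode_areas(hamiltonian(attack_site(sites, 0, 0.1, rng)))
# Modes are compared in eigenvalue order; level crossings are ignored in this sketch.
delta_w = np.abs(w_hit - w_seed)
```

With this normalization, W_m ranges from 1/N for a mode confined to a single site up to 1 for a mode spread uniformly over the lattice, which is why the length-N array of W_m can be reshaped into a 16×16 image with values in a bounded range.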

- Forward prediction: The D2L CNN predicts localization from disorder with test accuracy 1 − L_D2L ≈ 94.80% across weak-to-strong disorder, closely matching ground-truth mode areas without solving eigenproblems.

- Inverse design: The L2D inverse model trained via the L2D2L scheme achieves test accuracy 1 − L_L2D2L ≈ 94.21% in reproducing target localizations through the cascade. When the ML-generated structures are validated directly by solving the Hamiltonian, the agreement between target and true mode areas drops to ~79.10%, with discrepancies concentrated in strongly localized, large-deformation regimes (extrapolation beyond D2L’s training domain).

- Emergent scale invariance: ML-generated structures that realize target localizations exhibit power-law, heavy-tailed distributions in atomic deformation magnitudes Δr, in contrast to normal-distributed seed structures. Example: complementary CDF fits a power law with exponent α ≈ 3.79 and lower bound Δr_min ≈ 0.432 for realizations with 0.20 ≤ ⟨W_avg⟩ ≤ 0.30; heavy tails persist across small to moderate sample sizes and across localization regimes. Alternative heavy-tailed fits (e.g., power law with cutoff, log-normal) confirm robustness of heavy-tail characterization.

- Architecture–structure link: The distribution of L2D’s output-layer weight strengths W from FC to outputs is itself heavy-tailed and mirrors the inflection/heavy-tail behavior seen in Δr, indicating that NN weight statistics shape the generated microstructure statistics. Ablation (reduced pooling) alters W distributions and correspondingly changes generated microstructures, while retaining heavy tails.

- Accuracy independence: Subsets with high (≥84%) and low (≤69%) test accuracies both display similar power-law Δr statistics, indicating scale invariance arises from network architecture rather than prediction errors.

- Robustness and hubs: Scale-free ML-generated structures show 2–4 orders of magnitude reduction in localization perturbations ΔW under random single-atom attacks compared to normal-random seeds, especially for highly localized modes. Hub atoms are observable: ML-generated structures exhibit non-democratic responses with localized high δ for specific atoms (hubs), whereas seed structures respond democratically (Erdős–Rényi-like). These behaviors align with fault-tolerant robustness to random failures and fragility to targeted attacks characteristic of scale-free systems.
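The power-law analysis behind the heavy-tail finding can be illustrated with the standard continuous maximum-likelihood estimator, α̂ = 1 + n / Σ ln(x_i / x_min), evaluated on the tail x_i ≥ x_min. The sketch below is an assumption-laden illustration rather than the authors' pipeline: it treats x_min as given instead of scanning for it, replaces the goodness-of-fit test with a plain Kolmogorov–Smirnov distance, and runs on synthetic samples drawn with the exponent and lower bound quoted above (α ≈ 3.79, Δr_min ≈ 0.432), not on the paper's data. All function names are hypothetical.

```python
import math
import random

def fit_alpha(samples, x_min):
    """Continuous power-law MLE for the exponent, using only samples >= x_min."""
    tail = [x for x in samples if x >= x_min]
    if len(tail) < 2:
        raise ValueError("need at least two samples in the tail")
    log_sum = sum(math.log(x / x_min) for x in tail)
    return 1.0 + len(tail) / log_sum, len(tail)

def ks_distance(samples, x_min, alpha):
    """Largest gap between the empirical tail CDF and the fitted power-law CDF."""
    tail = sorted(x for x in samples if x >= x_min)
    n = len(tail)
    worst = 0.0
    for k, x in enumerate(tail):
        model_cdf = 1.0 - (x / x_min) ** (1.0 - alpha)
        # Compare against the empirical CDF just before and just after x.
        worst = max(worst, abs(model_cdf - k / n), abs(model_cdf - (k + 1) / n))
    return worst

def sample_power_law(n, x_min, alpha, rng):
    """Inverse-transform sampling from p(x) ~ x^(-alpha) for x >= x_min."""
    return [x_min * (1.0 - rng.random()) ** (-1.0 / (alpha - 1.0)) for _ in range(n)]

rng = random.Random(0)
synthetic = sample_power_law(20000, x_min=0.432, alpha=3.79, rng=rng)  # synthetic data
alpha_hat, n_tail = fit_alpha(synthetic, x_min=0.432)
ks = ks_distance(synthetic, x_min=0.432, alpha=alpha_hat)
```

The standard error of this estimator is roughly (α̂ − 1)/√n, so the recovered exponent tightens as more realizations enter the tail, which is one way to read the statement that α and Δr_min are assessed over varying numbers of realizations.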

The study demonstrates that deep CNNs can learn and invert the complex mapping between disordered microstructures and wave localization, and, crucially, that the inverse design process selects microstructures with scale-free, heavy-tailed statistics. This resolves the non-uniqueness of the inverse problem by biasing solutions according to the learned network’s weight distributions, thereby preserving target localization while altering other spectral properties. The observed heavy-tailed microstructural statistics correlate with heavy-tailed weight matrices reported in random matrix analyses of trained deep networks, providing a mechanism linking ML architecture to real-space material statistics. Consequently, ML-generated scale-free structures inherit network-science properties—robustness to random defects and sensitivity to targeted perturbations—improving stability of localization while enabling controllable modulation via hub atoms. The results suggest that tailoring architectures (pooling depth, FC-output connectivity) or adopting alternative models (U-net, transformers, attention) can systematically steer microstructural statistics and, thus, device robustness/sensitivity trade-offs. Graph-theoretic interpretations (e.g., isoperimetric parameters) further frame ML-induced changes as topology adjustments that conserve localization but tune other wave properties.

The work introduces a CNN-based framework to predict and inversely design disorder–localization relationships, revealing that ML inverse design naturally yields scale-invariant, heavy-tailed disordered microstructures. Forward D2L models provide near-instant localization prediction, while inverse L2D models generate structures achieving target localizations. The ML-induced scale-free statistics confer substantial robustness (2–4 orders of magnitude improvement) to random defects and highlight hub atoms’ role in targeted sensitivity. These insights open a path to architecturally controlling microstructural statistics via ML to realize wave materials with desired robustness/sensitivity profiles and to design devices in lasing, energy storage, and disordered bandgap materials. Future work includes expanding training domains to reduce extrapolation errors, exploring architectures (U-net, transformers, attention) to tune statistical exponents and structural families, integrating reinforcement/unsupervised learning for broader inverse design, and leveraging graph-theoretic properties to jointly optimize multiple wave quantities (e.g., localization and spectral characteristics).

- Non-uniqueness and architectural bias: The inverse problem admits many microstructures per target localization; the L2D solution is biased by network architecture and weight statistics, potentially limiting diversity of discovered structures.

- Extrapolation limits: Discrepancies arise in strongly localized regimes due to ML-generated deformations exceeding the D2L training range, constraining accuracy; broader training distributions and deeper models are needed.

- Imperfect scale-free behavior: Observed heavy tails are not perfectly scale-free; relationships among heavy-tailedness, exact scale-freeness, robustness, and modulation sensitivity require further quantitative study.

- Scope: Results are demonstrated on 2D square lattices with uncorrelated seed disorder; tunability of power-law exponent α and extension to correlated disorders or 3D systems remain to be established.

- Energy spectra: While localization is preserved, energy spectra can differ markedly between seed and ML-generated structures, which may matter for specific applications and requires joint-objective design.

- Data/code availability: Data and code are available upon request rather than fully open-sourced within the paper, potentially limiting immediate reproducibility.
